
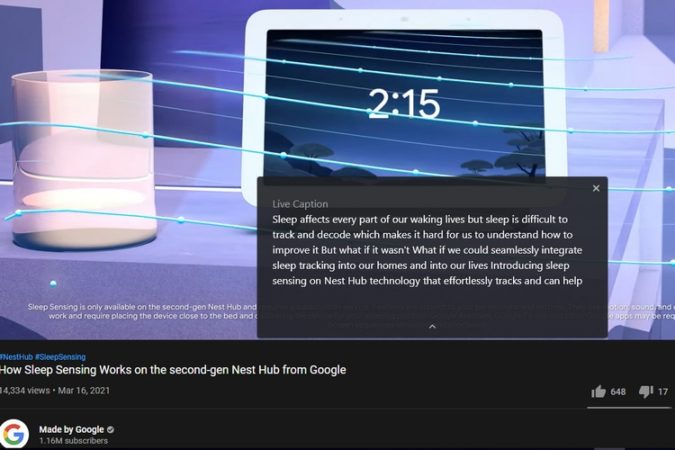

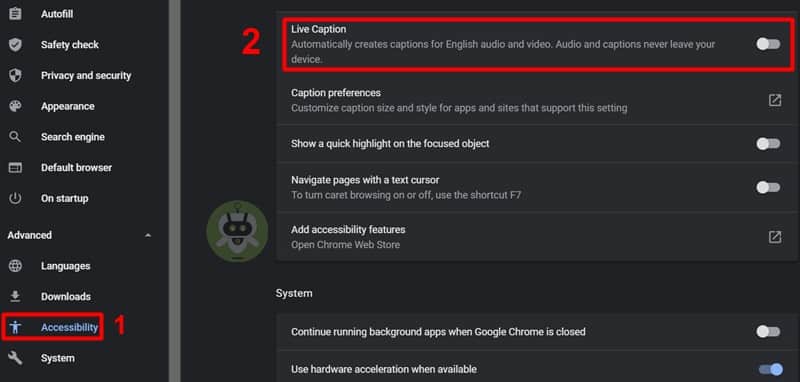

11/29/2023

Google live caption

I am deaf and had been relying on the web interface to Zoom in conjunction with Google's Live Captions feature. At some point in the recent past, this stopped working, causing me a bit of disruption. (Unless you are deaf, you really can't understand how disruptive this is.) Rome1's comment helped me understand that others have also observed Google Live Captions failing to work.

If one is a meeting leader, server-side (not just client-side) settings have to be enabled for automatic captions to be available. If one is a participant, one must depend on the leader enabling captioning; there is no client-side captioning available if the leader has not enabled it on their end.

Google is at fault for disabling Live Captions in this context. It is simply not OK to pull the rug out from under deaf users like this. It is simply not OK to deny deaf users access to automatically generated captions when they could be available all the time, regardless of the leader's settings. I hope Zoom management will read this comment and consider allowing participants unrestricted access to CC. (The fact that I have to beseech a privately owned company for disability access so I can participate in others' meetings using their widely used software really, really grates on me and is inherently unfair.)

Posted by Vikas Bahirwani, Research Scientist, and Susan Xu, Software Engineer, Google Augmented Reality

Automatic speech recognition (ASR) technology has made conversations more accessible with live captions in remote conferencing software, mobile applications, and head-worn displays. However, to maintain real-time responsiveness, live caption systems often display interim predictions that are updated as new utterances are received. This can cause text instability (a “flicker” where previously displayed text is updated, shown in the captions on the left in the video below), which can impair users' reading experience due to distraction, fatigue, and difficulty following the conversation.

In “Modeling and Improving Text Stability in Live Captions”, presented at ACM CHI 2023, we formalize this problem of text stability through a few key contributions. First, we quantify text instability with a vision-based flicker metric that uses luminance contrast and the discrete Fourier transform. Second, we introduce a stability algorithm that stabilizes the rendering of live captions via tokenized alignment, semantic merging, and smooth animation. Finally, we conducted a user study (N=123) to understand viewers' experience with live captioning. Our statistical analysis demonstrates a strong correlation between our proposed flicker metric and viewers' experience. Furthermore, it shows that our proposed stabilization techniques significantly improve viewers' experience (e.g., the captions on the right in the video above).

Inspired by previous work, we propose a flicker-based metric to quantify text stability and objectively evaluate the performance of live captioning systems. Specifically, our goal is to quantify the flicker in a grayscale live caption video. We achieve this by comparing the difference in luminance between the individual frames (frames in the figures below) that constitute the video. Large visual changes in luminance are obvious (e.g., the addition of the word “bright” in the figure on the bottom), but subtle changes (e.g., update from “. Gold is nice”) may be difficult for readers to discern. However, converting the change in luminance into its constituent frequencies exposes both the obvious and the subtle changes.

Thus, for each pair of contiguous frames, we convert the difference in luminance into its constituent frequencies using the discrete Fourier transform. We then sum over each of the low and high frequencies to quantify the flicker in this pair. Finally, we average over all of the frame pairs to get a per-video flicker.

For instance, we can see below that two identical frames (top) yield a flicker of 0, while two non-identical frames (bottom) yield a non-zero flicker. It is worth noting that higher values of the metric indicate more flicker in the video and, thus, a worse user experience than lower values of the metric.

Illustration of the flicker metric between two identical frames.

Illustration of the flicker between two non-identical frames.

To improve the stability of live captions, we propose an algorithm that takes as input the already-rendered sequence of tokens (e.g., “Previous” in the figure below) and the new sequence of ASR predictions, and outputs an updated, stabilized text (e.g., “Updated text (with stabilization)” below). It considers both the natural language understanding (NLU) aspect and the ergonomic aspect (display, layout, etc.) of the user experience in deciding when and how to produce a stable updated text.
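The flicker metric described in this post can be illustrated with a small Python sketch. It assumes that each frame is a grayscale image given as a list of rows of luminance values. For each pair of contiguous frames, it takes the 2D discrete Fourier transform of the luminance difference. It then sums the magnitudes of all frequency components, treating low and high frequencies alike with no weighting, and averages over all frame pairs. The function names, the unweighted sum, and the absence of any normalization are assumptions made for illustration and are not taken from the paper. The naive DFT is only practical for tiny frames; a real implementation would use an FFT.

```python
import cmath
import math
from typing import List, Sequence

# Assumption: a frame is a grayscale image stored as rows of luminance values.
Frame = List[List[float]]


def dft2(image: Frame) -> List[List[complex]]:
    """Naive 2D discrete Fourier transform, O(M^2 N^2); only for tiny demo frames."""
    rows, cols = len(image), len(image[0])
    out = [[0j] * cols for _ in range(rows)]
    for u in range(rows):
        for v in range(cols):
            acc = 0j
            for x in range(rows):
                for y in range(cols):
                    angle = -2 * math.pi * (u * x / rows + v * y / cols)
                    acc += image[x][y] * cmath.exp(1j * angle)
            out[u][v] = acc
    return out


def pair_flicker(prev: Frame, curr: Frame) -> float:
    """Flicker between two same-sized contiguous frames.

    Hypothetical scoring: total spectral magnitude of the luminance difference,
    summed over all (low and high) frequencies with equal weight.
    """
    diff = [[c - p for p, c in zip(prow, crow)] for prow, crow in zip(prev, curr)]
    spectrum = dft2(diff)
    return sum(abs(z) for row in spectrum for z in row)


def video_flicker(frames: Sequence[Frame]) -> float:
    """Per-video flicker: mean pair flicker over all contiguous frame pairs."""
    if len(frames) < 2:
        return 0.0
    scores = [pair_flicker(a, b) for a, b in zip(frames, frames[1:])]
    return sum(scores) / len(scores)
```

Consider two 2×2 frames that differ by 1.0 in a single pixel. Every frequency component of their difference has magnitude 1, so `pair_flicker` returns about 4.0. Identical frames give exactly 0.0, which mirrors the identical and non-identical illustrations in the post.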

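This post does not specify the stability algorithm (tokenized alignment, semantic merging, and smooth animation) in enough detail to reproduce it. As a rough illustration of the tokenized-alignment step only, the following Python sketch aligns the already-rendered tokens with a new ASR hypothesis using the standard library's `difflib.SequenceMatcher`. It then applies a hypothetical policy: tokens more than `tail` positions from the end of the rendered text are frozen, and only the tail may change. The `stabilize` and `aligned_index` functions, the `tail` parameter, and the freezing policy are all assumptions for illustration. The sketch performs no semantic merging or animation.

```python
from difflib import SequenceMatcher
from typing import List


def aligned_index(previous: List[str], prediction: List[str], i: int) -> int:
    """Map token boundary i in `previous` to the aligned boundary in `prediction`."""
    matcher = SequenceMatcher(a=previous, b=prediction, autojunk=False)
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if i1 <= i <= i2:
            if tag == "equal":
                # Identical tokens map one-to-one.
                return j1 + (i - i1)
            # Boundary at the start of a changed span: keep the prediction's version.
            # Boundary inside or at the end of it: frozen tokens win, skip that span.
            return j1 if i == i1 else j2
    return len(prediction)


def stabilize(previous: List[str], prediction: List[str], tail: int = 3) -> List[str]:
    """Merge a new ASR hypothesis into already-rendered caption tokens (hypothetical policy).

    Tokens rendered more than `tail` positions from the end are frozen; only the
    tail of the caption may be rewritten by the new prediction.
    """
    frozen_len = max(len(previous) - tail, 0)
    if frozen_len == 0:
        return list(prediction)
    j = aligned_index(previous, prediction, frozen_len)
    return previous[:frozen_len] + prediction[j:]


if __name__ == "__main__":
    rendered = "the sky was gold nice".split()
    hypothesis = "the sky is gold is nice today".split()
    print(" ".join(stabilize(rendered, hypothesis, tail=2)))
    # prints: the sky was gold is nice today
```

This policy trades accuracy for stability. The frozen word “was” is not corrected to “is”, while the unfrozen tail picks up the new words from the hypothesis.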


RSS Feed
